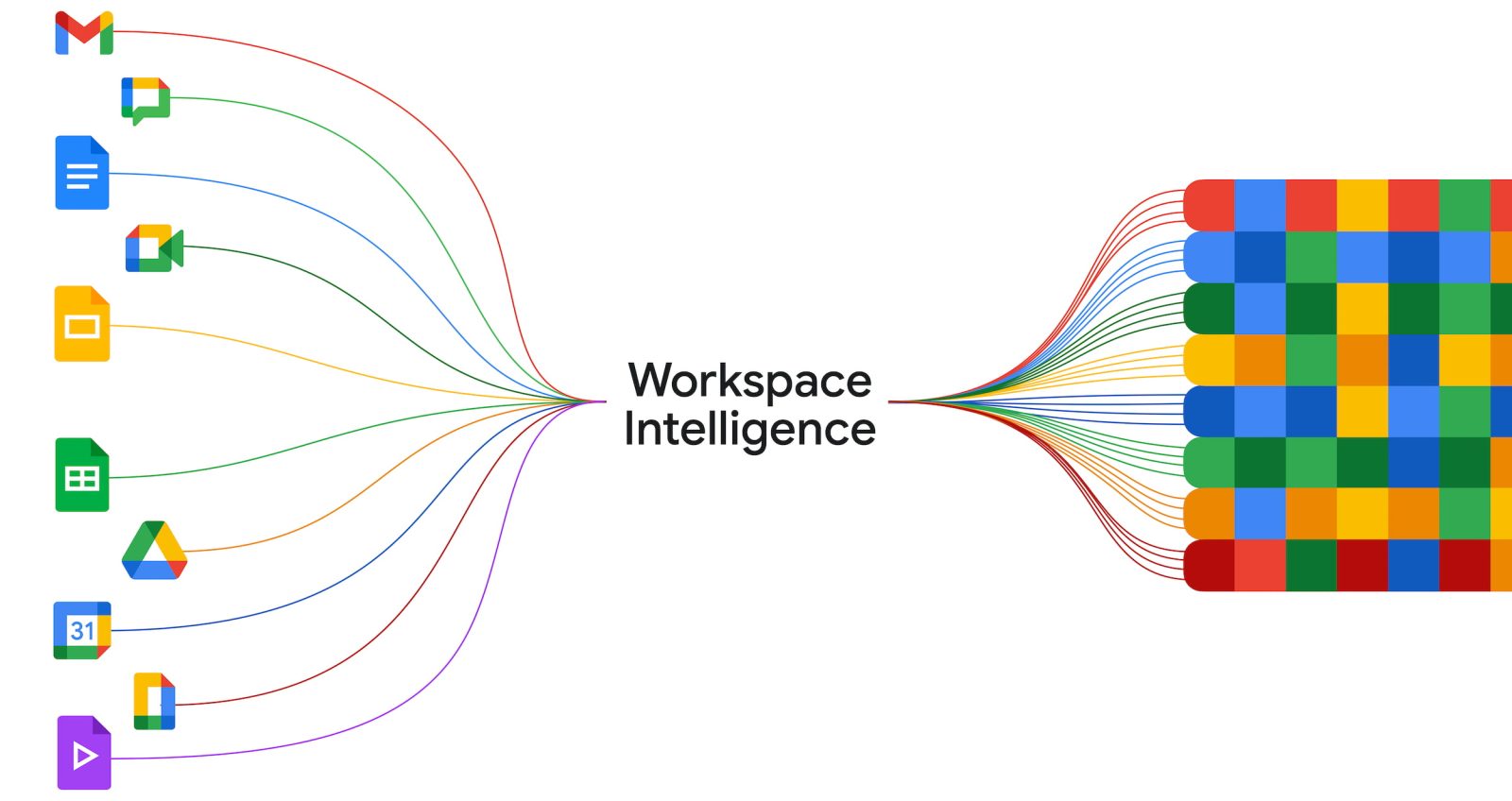

At Cloud Next 2026, Google today announced “Workspace Intelligence” to provide “highly accurate, personalized context for every app.”

This system “understands complex semantic relationships” between data in Gmail, Docs, and other Workspace apps, your active projects, collaborators, and other company-specific information. Workspace Intelligence uses Google’s search capabilities and advanced Gemini reasoning for:

- Information gathering: Workspace Intelligence handles the heavy lifting by gathering the right information for you. It breaks down context walls to ensure you have everything you need the moment you want to take action.

- Situational awareness: Using advanced Gemini reasoning, Workspace Intelligence knows what is most important to you right now — ensuring you never miss an action item.

- True personalization: By understanding your past work and communication patterns, Workspace Intelligence learns your unique work style, voice, and formatting preferences to ensure every output sounds authentically like you.

By leveraging the deep semantic context of your digital workflows that span across meeting notes, emails, files, and more, it creates an intelligence layer grounded in your unique context that can fundamentally change the way you work.

This background layer powers features like AI Inbox and AI Overviews in Gmail. Workspace Intelligence is also responsible for new capabilities like “Ask Gemini” in Google Chat. This dedicated conversation with Gemini is positioned as a “unified command line for all of your work.”

Simply state your goal, and Gemini will work behind the scenes to deliver the finished result directly into your chat.

Ask Gemini in Chat can accomplish complex tasks that you give it, including document and slide generation, file search based on descriptions, and finding the right times for meetings given everyone’s schedule. It can also create a daily briefing and integrate with third-party tools, like Asana, Jira, and Salesforce.

In Google Docs, Gemini can use Workspace Intelligence to create infographics “grounded in your business data.” It can edit multiple images simultaneously “to create visual consistency across your document.” Another capability can “triage and respond to comments in your documents, and even edit your document based on comment feedback.”

Gemini in Google Slides uses Workspace Intelligence for context and to adhere strictly to “your company’s templates and visual styles” when generating slide decks in one shot. In Google Sheets, it’s used to build and edit spreadsheets conversationally.

Workspace Intelligence retrieves your relevant emails, chats, files, and information from the web to transform ideas into professionally formatted drafts that mimic your exact voice, brand, style, and company templates.

The decision to explicitly brand “Workspace Intelligence,” rather than just position the functionality as an extension of Gemini, is an interesting one. That said, this layer will ultimately work in the background, and end users won’t need to be aware of it.

Google also announced the eighth generation of Tensor Processing Units. Of note this year is the introduction of “two distinct, purpose-built architectures for training and inference.”

TPU 8t (left) is for training, with the goal of reducing the “frontier model development cycle from months to weeks.” It offers 2.8x better price/performance than the last generation. Google describes its features as follows:

- Massive scale: A single TPU 8t superpod now scales to 9,600 chips and two petabytes of shared high bandwidth memory, with double the interchip bandwidth of the previous generation. This architecture delivers 121 ExaFlops of compute and allows the most complex models to leverage a single, massive pool of memory.

- Maximum utilization: By also integrating 10x faster storage access, combined with TPUDirect to pull data directly into the TPU, TPU 8t helps ensure maximum utilization of the end-to-end system.

- Near-linear scaling: Our new Virgo Network, combined with JAX and our Pathways software, means TPU 8t provides near-linear scaling for up to a million chips in a single logical cluster.
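For a rough sense of scale, the superpod totals quoted above can be divided down to per-chip figures. This is a back-of-envelope sketch, not a published spec: it assumes decimal units, an even split across chips, and that "two petabytes" is not heavily rounded. The source also does not state the numeric precision behind the ExaFlops figure, so the compute number is only comparable to other figures quoted on the same basis.

```python
# Back-of-envelope per-chip figures derived from the quoted superpod totals.
# Assumptions (not from Google): decimal units (1 PB = 1e15 bytes,
# 1 ExaFlop = 1e18 FLOP/s) and resources spread evenly across all chips.
CHIPS = 9_600
HBM_TOTAL_BYTES = 2e15        # "two petabytes" of shared HBM
COMPUTE_TOTAL_FLOPS = 121e18  # "121 ExaFlops"


def per_chip(total: float, chips: int = CHIPS) -> float:
    """Divide a superpod-wide total evenly across its chips."""
    return total / chips


hbm_per_chip_gb = per_chip(HBM_TOTAL_BYTES) / 1e9
compute_per_chip_pflops = per_chip(COMPUTE_TOTAL_FLOPS) / 1e15

print(f"HBM per chip: ~{hbm_per_chip_gb:.0f} GB")
print(f"Compute per chip: ~{compute_per_chip_pflops:.1f} PFLOPS")
```

Under these assumptions, each chip works out to roughly 208 GB of HBM and about 12.6 PFLOPS.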

Meanwhile, TPU 8i (right) is for inference, or running models. It provides 80% better performance-per-dollar than before, which Google says translates to companies being able to “serve nearly twice the customer volume at the same cost.” Google describes its features as follows:

- Breaking the “memory wall”: To stop processors from sitting idle, TPU 8i pairs 288 GB of high-bandwidth memory with 384 MB of on-chip SRAM — 3x more than the previous generation — keeping a model’s active working set entirely on-chip.

- Axion-powered efficiency: We doubled the physical CPU hosts per server, moving to our custom Axion Arm-based CPUs. By using a non-uniform memory architecture (NUMA) for isolation, we have optimized the full system for superior performance.

- Scaling MoE models: For modern Mixture of Experts (MoE) models, we doubled the Interconnect (ICI) bandwidth to 19.2 Tb/s. Our new Boardfly architecture reduces the maximum network diameter by more than 50%, ensuring the system works as one cohesive, low-latency unit.

- Eliminating lag: Our new on-chip Collectives Acceleration Engine (CAE) offloads global operations, reducing on-chip latency by up to 5x, minimizing lag.
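The relative claims for TPU 8i can be unpacked with simple arithmetic. The sketch below assumes "80% better performance-per-dollar" means a 1.8x ratio, "doubled" means exactly 2x, and "3x more" means a 3x multiple. The resulting previous-generation values are implied by those readings, not figures stated in the announcement.

```python
# Arithmetic on the relative TPU 8i claims quoted above.
# Assumed readings (not confirmed by the source): "80% better" = 1.8x,
# "doubled" = 2x, "3x more" = a 3x multiple of the previous value.
PERF_PER_DOLLAR_GAIN = 0.80
ICI_TBPS = 19.2   # interconnect bandwidth after doubling
SRAM_MB = 384     # on-chip SRAM, "3x more than the previous generation"


def volume_at_same_cost(gain: float) -> float:
    """Relative volume served for the same spend."""
    return 1.0 + gain


def cost_at_same_volume(gain: float) -> float:
    """Relative spend needed to serve the same volume."""
    return 1.0 / (1.0 + gain)


implied_prev_ici_tbps = ICI_TBPS / 2
implied_prev_sram_mb = SRAM_MB / 3

print(f"Volume at same cost: {volume_at_same_cost(PERF_PER_DOLLAR_GAIN):.1f}x")
print(f"Cost at same volume: {cost_at_same_volume(PERF_PER_DOLLAR_GAIN):.2f}x")
print(f"Implied previous ICI bandwidth: {implied_prev_ici_tbps:.1f} Tb/s")
print(f"Implied previous on-chip SRAM: {implied_prev_sram_mb:.0f} MB")
```

A 1.8x volume multiplier is consistent with Google's "nearly twice the customer volume" phrasing. The same gain corresponds to roughly 56% of the previous cost for an unchanged workload.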

Note: Google Cloud sponsored lodging costs, but had no input on editorial coverage.
